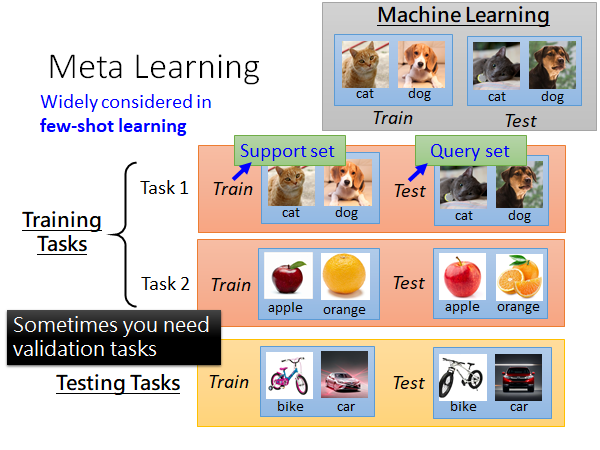

With a good initialization, we can quickly and easily train a good model when given a new task, using only a couple of gradient steps and a small amount of labeled data. Model-agnostic means that you can apply MAML to any model trained via gradient descent.

Suppose we are dealing with a supervised classification task T_1, and our model is f_θ with randomly initialized parameters θ. Using gradient descent steps, we can find the parameters that minimize the loss function for the given task. Now suppose we have a distribution over similar tasks, p(T). Meta-learning aims to find an optimal parameter initialization for this distribution: one that can be quickly adapted to a new related task using only a few labeled examples and a few gradient steps, or even a single one.
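To make the idea of learning an initialization over p(T) concrete, here is a minimal sketch of a MAML-style loop on a toy problem. It is an illustration under stated assumptions, not a reference implementation: instead of classification it uses a scalar regression model f_θ(x) = θ·x with squared-error loss, and the task distribution (lines y = slope·x with slope drawn uniformly from [1, 3]), the learning rates, and all function names are hypothetical choices made for this example.

```python
import random


def grad(theta, data):
    """Gradient of the mean squared error of f_theta(x) = theta * x."""
    return sum(2 * (theta * x - y) * x for x, y in data) / len(data)


def hessian(data):
    """Second derivative of the same loss (constant in theta for this model)."""
    return sum(2 * x * x for x, _ in data) / len(data)


def sample_task(rng, n=5):
    """Hypothetical p(T): y = slope * x with slope ~ U(1, 3), n samples per split."""
    slope = rng.uniform(1.0, 3.0)

    def draw():
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return [(x, slope * x) for x in xs]

    return draw(), draw()  # (training split, validation split)


def maml(theta, rng, inner_lr=0.1, outer_lr=0.05, meta_steps=500, batch=4):
    """Learn an initialization that performs well after ONE inner gradient step."""
    for _ in range(meta_steps):
        meta_grad = 0.0
        for _ in range(batch):
            train, val = sample_task(rng)
            # Inner loop: adapt the shared initialization to this task.
            adapted = theta - inner_lr * grad(theta, train)
            # Outer gradient: validation gradient at the adapted parameters,
            # multiplied by d(adapted)/d(theta) = 1 - inner_lr * hessian(train).
            meta_grad += grad(adapted, val) * (1 - inner_lr * hessian(train))
        # Outer loop: update the initialization itself.
        theta -= outer_lr * meta_grad / batch
    return theta


# Start from a deliberately poor initialization (assumed value).
theta_star = maml(theta=-3.0, rng=random.Random(0))
print("learned initialization:", theta_star)
```

In this toy setting the learned initialization ends up inside the range of task slopes, so a single gradient step on a handful of samples is enough to get close to any new task from the same distribution. For real models the derivative through the inner step is normally obtained with automatic differentiation rather than written by hand as it is here.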

In contrast to meta-learning, a conventional machine learning model gains knowledge only from data samples. Going back to our example, let's say we have no initial parameters pre-trained on ImageNet; instead, we've initialized them randomly. The initial parameters θ are shared across all tasks. For each task, we train its model on its own dataset, using the random initial parameters θ as a starting point. Then we evaluate the models on their validation datasets. Using the validation errors of all tasks (green lines in Fig. 3), we can measure how good the initial parameters θ were: the smaller the error, the easier it is for the task to adapt the initial parameters θ to its data.

The next step is to answer the question: how can we find better initial parameters based on the information obtained? The more interesting question is how to find initial parameters that are easy to adapt to an entirely new task using only a small number of training samples. There are many meta-learning methods for this, some of which we will discuss in the article.

Note that meta-learning methods are not limited to finding the best initial parameters; that is only a subset of a wide variety of meta-learning approaches. A few categorizations of meta-learning can be found in the following surveys. One of the best-known meta-learning algorithms is Model-Agnostic Meta-Learning (MAML). MAML finds the initialization of model parameters.
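The procedure for judging an initialization (start every task from the same θ, train on the task's own data, then average the validation errors) can be sketched in a few lines. This is only an illustration: the scalar model f_θ(x) = θ·x, the squared-error loss, the three synthetic tasks, and every function name below are assumptions introduced for the example, not part of the article's setup.

```python
import random


def mse(theta, data):
    """Mean squared error of the toy model f_theta(x) = theta * x."""
    return sum((theta * x - y) ** 2 for x, y in data) / len(data)


def grad_mse(theta, data):
    """Gradient of the mean squared error with respect to theta."""
    return sum(2 * (theta * x - y) * x for x, y in data) / len(data)


def adapt(theta, train, lr=0.1, steps=1):
    """Train one task's model with a few gradient steps, starting from theta."""
    for _ in range(steps):
        theta = theta - lr * grad_mse(theta, train)
    return theta


def make_task(slope, rng, n=5):
    """Hypothetical task: y = slope * x, with small train and validation splits."""
    def draw():
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return [(x, slope * x) for x in xs]

    return draw(), draw()


def score_initialization(theta0, tasks, lr=0.1, steps=1):
    """Average validation error after adapting the shared theta0 to each task."""
    errors = []
    for train, val in tasks:
        theta_task = adapt(theta0, train, lr, steps)
        errors.append(mse(theta_task, val))
    return sum(errors) / len(errors)


rng = random.Random(0)
tasks = [make_task(slope, rng) for slope in (1.5, 2.0, 2.5)]

far = score_initialization(-3.0, tasks)   # initialization far from every task
near = score_initialization(2.0, tasks)   # initialization close to all tasks
print("far:", far, "near:", near)
```

The lower average validation error for the second starting point is exactly the signal a meta-learning method can use to move the shared initialization in a better direction.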

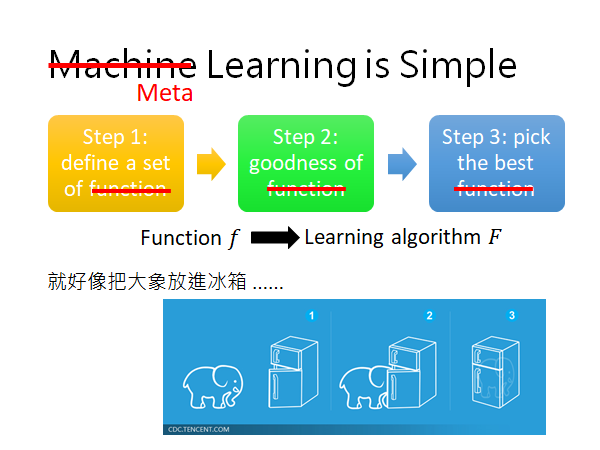

Humans acquire many different concepts and skills over a lifetime. What is important is that we do not learn them entirely from scratch but actively use past experience. We use the skills we learned earlier when solving related problems, as well as approaches that previously worked well. The more experienced and skilled we are, the faster and easier it is for us to learn something new. To put it simply, we learn how to learn with experience. The ability to learn and adapt quickly from a small number of examples is one of the most essential abilities of humans and of intelligence in general.

As opposed to humans, machine learning algorithms typically require a lot of examples to perform well. Consequently, the success of ML models has mainly been in areas where a vast amount of data can be collected or simulated. Such areas also have enormous compute resources available. The question is how to obtain a good ML model when data is intrinsically rare or expensive, or when compute resources are unavailable.

The standard answer is to use transfer learning. In transfer learning, we take a model trained on one or more large datasets and use it as a starting point for training models on our small datasets. For instance, suppose we were asked to develop models for several medical tasks: task 1, classification of tumors in an image as benign or malignant; task 2, classification of thoracic abnormalities from chest radiographs; task 3, breast cancer detection; and so on. The problem is a critical lack of data: from 5 to 10 samples for each task. So we take a model pre-trained on the large ImageNet dataset and fine-tune it on each given task. However, the data on which the model has been pre-trained must have something in common with our data.

Let's look at another approach for training models on a small amount of data: meta-learning. Meta-learning provides an alternative paradigm where a machine learning model gains experience over multiple learning episodes, often covering a distribution of related tasks, and uses this experience to improve its future learning performance.
